Modeling and Simulating Eye Oculomotor Behavior to Support Retina Implant Development

By Ra’anan Gefen and Leonid Yanovitz, Nano-Retina Inc.

Age-related macular degeneration, the leading cause of vision loss in North America, affects the retina, the light-sensitive layer of tissue lining the inside of the eye. The retina contains the photoreceptor cells that detect light passing through the cornea and the rest of the eye’s optical system. Until recently, vision loss stemming from a damaged retina was irreversible.

At Nano-Retina, we are developing Bio-Retina, an artificial retina designed to restore functional vision to patients with retinal degenerative diseases. Measuring just 3 mm × 4 mm and completely self-contained, Bio-Retina will be implanted in the eye via a minimally invasive procedure (Figure 1).

Bio-Retina transforms light received through the eye’s optical system into electrical signals that stimulate the same neurons a healthy retina would. The first-generation device, which represents images at a resolution of about 600 pixels, is designed to restore 20/200 vision. We anticipate that the second-generation Bio-Retina will provide much higher spatial resolution (Figure 2).

Before committing to hardware, we needed to verify our design by testing it under everyday scenarios, such as riding in a car, watching television, or simply walking down a hallway. For this purpose we created a system model in MATLAB® and Simulink® incorporating the eye’s optics and movements, environmental factors, and Bio-Retina’s analog and digital subsystems. By simulating the model under multiple scenarios, we gained much deeper insights into the system behavior than we could have obtained by running separate simulations on individual components or design elements.

Modeling Eye Optics and Movement

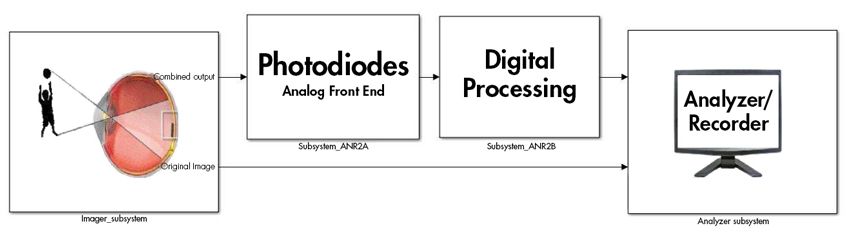

Our complete Simulink model includes a plant model of the imager (the eye’s oculomotor and optical behavior); a model of the analog front end, which includes Bio-Retina’s photodiodes; and the digital processing subsystem, which generates the signals used to stimulate neural activity (Figure 3). The model also includes an analyzer subsystem block, which we use to evaluate the neural stimulus signals.

The analog and digital models enable us to run extensive simulations of our system before implementing the design in silicon. Other tools can simulate these models, but the Simulink environment gives us the flexibility to combine them with nonelectrical subsystems, such as the human eye, environmental conditions, and human perception of the stimulated pattern.

The imager model captures all the biological and environmental factors that affect the light hitting the photodiodes. Among the most important of these factors is eye movement. In normal situations, our eyes constantly scan the objects in front of us with voluntary movements known as saccades. Even when our gaze is fixed on a single object, the eye makes small, involuntary movements known as microsaccades. This behavior can be observed by recording a person’s eye movements while they look at a face (Figure 4).
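To illustrate how fixational eye movements could be fed into an imager model, the following Python sketch generates a synthetic gaze trace. It is not Nano-Retina’s model. It assumes, for illustration only, that slow fixational drift behaves like a 2-D Gaussian random walk and that microsaccades are instantaneous jumps of fixed amplitude in a random direction, arriving as a Poisson process. The function name `simulate_gaze` and all parameter values are hypothetical.

```python
import math
import random


def simulate_gaze(duration_s, fs_hz=1000.0, drift_deg_per_sqrt_s=0.05,
                  microsaccade_rate_hz=1.5, microsaccade_amp_deg=0.3, seed=0):
    """Return a list of (x, y) gaze positions in degrees, one per sample.

    Assumed model (illustrative only):
      * drift: 2-D Gaussian random walk with the given diffusion scale
      * microsaccades: fixed-amplitude jumps in a random direction,
        occurring as a Poisson process at microsaccade_rate_hz
    """
    rng = random.Random(seed)                 # seeded for repeatable runs
    dt = 1.0 / fs_hz
    n = int(round(duration_s * fs_hz))
    step_sd = drift_deg_per_sqrt_s * math.sqrt(dt)   # per-sample drift step
    p_jump = microsaccade_rate_hz * dt               # per-sample jump probability
    x = y = 0.0
    trace = []
    for _ in range(n):
        # Slow drift component.
        x += rng.gauss(0.0, step_sd)
        y += rng.gauss(0.0, step_sd)
        # Occasional microsaccade.
        if rng.random() < p_jump:
            angle = rng.uniform(0.0, 2.0 * math.pi)
            x += microsaccade_amp_deg * math.cos(angle)
            y += microsaccade_amp_deg * math.sin(angle)
        trace.append((x, y))
    return trace
```

For example, `simulate_gaze(2.0)` returns 2,000 samples (2 s at 1 kHz), which could be used to shift the input image before it reaches a photodiode model. Real microsaccades also tend to correct accumulated drift, which this sketch ignores.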

Such movements introduce temporal frequency components into the signal reaching the processing unit; some of these we want to preserve, and others we want to suppress. Our model accounts for these movements as well as for the larger movements that occur naturally when a person glances around, allowing us to characterize thoroughly how the implant handles them. In addition to movement effects, the imager model captures the subtle changes in light reaching the retina caused by blinking, optical conditions such as myopia (nearsightedness), and other characteristics of the eye’s optical system.
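One way to see why eye movements introduce frequencies: when an image pattern with spatial frequency f_s (cycles per degree) slides across a photodiode at angular velocity v (degrees per second), the photodiode sees a temporal frequency f_t = f_s·|v|. The hypothetical Python helpers below compute that relationship and the light reaching a single photodiode from a sinusoidal grating under a given horizontal gaze trace. Blinks are modeled, as a simplifying assumption, as intervals of complete darkness; the function names and default values are illustrative.

```python
import math


def temporal_frequency_hz(spatial_freq_cpd, retinal_velocity_deg_s):
    """Temporal frequency seen by one photodiode when a grating with the given
    spatial frequency (cycles/degree) moves across it at the given angular
    velocity (degrees/second): f_t = f_s * |v|."""
    return spatial_freq_cpd * abs(retinal_velocity_deg_s)


def photodiode_signal(gaze_x_deg, spatial_freq_cpd, fs_hz, blinks=(),
                      mean_lum=1.0, contrast=0.5):
    """Luminance reaching one photodiode from a vertical sinusoidal grating.

    gaze_x_deg: horizontal gaze position per sample (degrees)
    fs_hz:      sample rate of the gaze trace (Hz)
    blinks:     (start_s, end_s) intervals during which the eyelid is
                assumed, for simplicity, to block all light
    """
    signal = []
    for i, x in enumerate(gaze_x_deg):
        t = i / fs_hz
        if any(start <= t < end for start, end in blinks):
            signal.append(0.0)          # eyelid closed: no light
            continue
        phase = 2.0 * math.pi * spatial_freq_cpd * x
        signal.append(mean_lum * (1.0 + contrast * math.cos(phase)))
    return signal
```

For instance, a drift of 2.5 degrees per second across a 4 cycles-per-degree pattern produces a 10 Hz component: `temporal_frequency_hz(4.0, 2.5)` returns `10.0`.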

A variety of environmental conditions that affect light before it reaches the eye are also modeled in Simulink. These conditions include the flicker of fluorescent lights, the frame rates of movies and television shows, the refresh rates of LED displays, and a wide range of light intensities (from moonlight to bright sunlight, for example).
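Time-varying light sources of this kind are straightforward to express as functions of time. The Python sketch below is illustrative only. It assumes that a fluorescent lamp on a magnetic ballast flickers sinusoidally at twice the mains frequency with a chosen modulation depth, that an LED display is driven by pulse-width modulation (PWM), and that video content is shown as a sample-and-hold sequence of frames. The function names, the modulation depth, and the approximate illuminance values in `LIGHT_LEVELS_LUX` are assumptions, not values from the Bio-Retina model.

```python
import math

# Approximate, order-of-magnitude illuminance values (assumed for illustration).
LIGHT_LEVELS_LUX = {"full moon": 0.1, "office": 500.0, "direct sunlight": 100_000.0}


def fluorescent_lux(t, mean_lux, mains_hz=50.0, depth=0.3):
    """Lamp flicker at twice the mains frequency around a mean level."""
    return mean_lux * (1.0 + depth * math.cos(2.0 * math.pi * 2.0 * mains_hz * t))


def led_pwm_lux(t, peak_lux, pwm_hz=1000.0, duty=0.25):
    """PWM-driven LED: on for the first `duty` fraction of every period."""
    return peak_lux if (t * pwm_hz) % 1.0 < duty else 0.0


def video_lux(t, fps, frame_lux):
    """Sample-and-hold video: each frame's level is held for 1/fps seconds;
    the list of frame levels loops."""
    return frame_lux[int(t * fps) % len(frame_lux)]


def dynamic_range_decades(low_lux, high_lux):
    """Span between two light levels in powers of ten."""
    return math.log10(high_lux / low_lux)
```

With these values, `dynamic_range_decades(LIGHT_LEVELS_LUX["full moon"], LIGHT_LEVELS_LUX["direct sunlight"])` is about 6, so a photodiode model would need to handle roughly a millionfold range of illuminance.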

Simulation and Verification

Simulations showed us how various combinations of environmental conditions, eye movements, and system parameters affect the overall performance, allowing us to better understand Bio-Retina’s capabilities and limitations. Simulations also enabled us to test the system’s performance under a practically limitless number of usage scenarios.

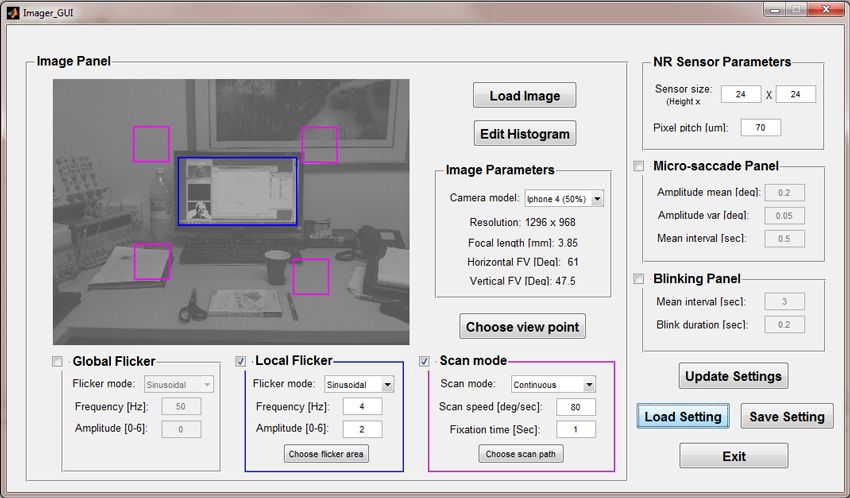

We developed an interface in MATLAB to make it easier to configure parameters for simulation runs and automate the data collection and processing (Figure 5).
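The interface itself is not described here, but the core of this kind of automation is usually a parameter sweep. As a hypothetical Python sketch, `sweep` runs a caller-supplied `run_scenario` function once for every combination of parameter values and collects the results. In a real setup, `run_scenario` would configure and launch one simulation run and return the metrics of interest; the toy version below only stands in for that.

```python
import itertools


def sweep(run_scenario, grid):
    """Run run_scenario(**params) for every combination of values in `grid`.

    grid maps each parameter name to a list of candidate values. Returns a
    list of (params, result) pairs in a deterministic order (parameter names
    sorted alphabetically, values in the order given).
    """
    names = sorted(grid)
    results = []
    for values in itertools.product(*(grid[name] for name in names)):
        params = dict(zip(names, values))
        results.append((params, run_scenario(**params)))
    return results


if __name__ == "__main__":
    # Toy stand-in for a simulation run (hypothetical metric).
    def run_scenario(flicker_hz, lux):
        return {"score": lux / (1.0 + flicker_hz)}

    grid = {"flicker_hz": [0.0, 100.0, 120.0], "lux": [0.1, 500.0, 100_000.0]}
    for params, result in sweep(run_scenario, grid):
        print(params, result)
```

A three-by-three grid already produces nine runs, and the count multiplies with every added parameter, which is why automating configuration and data collection pays off quickly.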

During simulations we provide still images or image streams to the imager subsystem. The output of the imager subsystem is processed by the analog subsystem. The output of the analog subsystem is then processed by the digital processing subsystem to generate appropriate neural stimulus signals.
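This data flow is a chain of three stages, each consuming the previous stage’s output. The Python sketch below mirrors that chain with deliberately simple, hypothetical stand-ins: block averaging onto a coarse pixel grid for the imager, a linear photodiode response that saturates at full scale for the analog front end, and quantization to an integer number of pulses per frame for the digital subsystem. None of these reflects Bio-Retina’s actual circuitry; the stage functions and their defaults are assumptions.

```python
def imager(image, rows, cols):
    """Block-average a 2-D list of luminance values onto a rows x cols grid.
    Assumes the image height and width are exact multiples of rows and cols."""
    bh, bw = len(image) // rows, len(image[0]) // cols
    grid = []
    for r in range(rows):
        grid_row = []
        for c in range(cols):
            block = [image[r * bh + i][c * bw + j]
                     for i in range(bh) for j in range(bw)]
            grid_row.append(sum(block) / len(block))
        grid.append(grid_row)
    return grid


def analog_front_end(frame, gain=1.0, full_scale=1.0):
    """Photodiode stage: linear in luminance, saturating at full_scale."""
    return [[min(gain * v, full_scale) for v in row] for row in frame]


def digital_processing(currents, full_scale=1.0, max_pulses=8):
    """Quantize each photocurrent to an integer pulse count per frame."""
    return [[round(max_pulses * current / full_scale) for current in row]
            for row in currents]


def run_pipeline(image, rows, cols):
    """Imager -> analog front end -> digital processing."""
    return digital_processing(analog_front_end(imager(image, rows, cols)))
```

A 4 × 4 test image reduced to a 2 × 2 grid, with one quadrant bright enough to saturate the photodiodes, yields `[[8, 4], [0, 8]]`.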

To assess the way an implant recipient might perceive these neural stimulus signals, we rely on the analyzer subsystem, which converts signal pulses back into an image that we can compare with the image originally used as input to the system. As a second verification step, we use the analyzer subsystem’s Simulink model to ensure that the amplitude and frequency of the stimulus signals are within established limits.
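Both verification steps can be expressed as small checks. In this hypothetical Python sketch, `reconstruct` inverts a pulse-count mapping back to normalized brightness, `rmse` measures how far the reconstruction is from a reference image of the same size, and `limit_violations` flags electrodes whose stimulus amplitude or pulse rate exceeds a given limit. The per-electrode (amplitude in µA, rate in Hz) representation and any limit values are assumptions for illustration; the established limits themselves are not stated here.

```python
import math


def reconstruct(pulses, max_pulses=8):
    """Map per-pixel pulse counts back to normalized brightness in [0, 1]."""
    return [[p / max_pulses for p in row] for row in pulses]


def rmse(image_a, image_b):
    """Root-mean-square difference between two equally sized 2-D images."""
    sq = [(a - b) ** 2
          for row_a, row_b in zip(image_a, image_b)
          for a, b in zip(row_a, row_b)]
    return math.sqrt(sum(sq) / len(sq))


def limit_violations(stimuli, max_amplitude_ua, max_rate_hz):
    """stimuli: list of (amplitude_uA, rate_Hz) per electrode.
    Returns the indices of electrodes that exceed either limit."""
    return [k for k, (amp, rate) in enumerate(stimuli)
            if amp > max_amplitude_ua or rate > max_rate_hz]
```

Under these simplified assumptions, a reconstruction error near zero together with an empty violation list would indicate that the stimulus both preserves the image content and stays within the allowed envelope.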

Hardware Implementation and Testing

As we work on the second generation of the Bio-Retina implant, we continue to use Simulink to gain insights into the design via system-level simulations of the eye and our device working together. When the second-generation device is ready for testing, we will generate test cases from our Simulink simulations and compare the hardware test results with the simulation results, both to verify the hardware implementation and to refine future simulation models.
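Comparing hardware measurements against simulation output typically comes down to checking each sample against a tolerance. The hypothetical Python helper below reports every sample where a measured trace disagrees with the simulated ("golden") trace beyond a relative or absolute tolerance; the default tolerances are placeholders, not figures from the Bio-Retina program.

```python
import math


def compare_to_golden(hw, sim, rel_tol=0.05, abs_tol=1e-3):
    """Return (index, hw_value, sim_value) for every sample where the hardware
    measurement and the simulation disagree beyond the given tolerances."""
    if len(hw) != len(sim):
        raise ValueError("hardware and simulation traces differ in length")
    return [(i, h, s) for i, (h, s) in enumerate(zip(hw, sim))
            if not math.isclose(h, s, rel_tol=rel_tol, abs_tol=abs_tol)]
```

An empty result means the hardware matched the simulation within tolerance; a non-empty one points to the exact samples worth investigating, whether the discrepancy turns out to be a hardware defect or a gap in the model.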

Published 2014 - 92214v00