batchnorm

Normalize data across all observations for each channel independently

Syntax

Description

The batch normalization operation normalizes the input data across all observations for each channel independently. To speed up training of the convolutional neural network and reduce the sensitivity to network initialization, use batch normalization between convolution and nonlinear operations such as relu.

After normalization, the operation shifts the input by a learnable offset β and scales it by a learnable scale factor γ.
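As a sketch of what the operation computes, the standard batch normalization formulation can be written as follows. The symbols $x_i$, $\hat{x}_i$, $\mu_B$, $\sigma_B^2$, and $\epsilon$ are notation assumed here for illustration; only β and γ are named in the text above. $\mu_B$ and $\sigma_B^2$ denote the mean and variance of one channel taken over all observations, and $\epsilon$ is a small constant that keeps the division stable when the variance is close to zero.

$$
\hat{x}_i = \frac{x_i - \mu_B}{\sqrt{\sigma_B^2 + \epsilon}}, \qquad y_i = \gamma \hat{x}_i + \beta
$$

With $\gamma = 1$ and $\beta = 0$, each channel of the output has approximately zero mean and unit variance.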

The batchnorm function applies the batch normalization operation to dlarray data.

Using dlarray objects makes working with high-dimensional data easier by allowing you to label the dimensions. For example, you can label which dimensions correspond to spatial, time, channel, and batch dimensions using the "S", "T", "C", and "B" labels, respectively. For unspecified and other dimensions, use the "U" label. For dlarray object functions that operate over particular dimensions, you can specify the dimension labels by formatting the dlarray object directly, or by using the DataFormat option.

Note

To apply batch normalization within a dlnetwork object, use batchNormalizationLayer.

Y = batchnorm(X,offset,scaleFactor) applies the batch normalization operation to the input data X using the population mean and variance of the input data and the specified offset and scale factor.

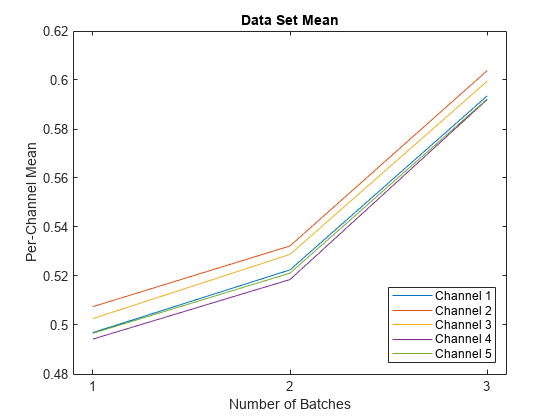

The function normalizes over the 'S' (spatial), 'T' (time), 'B' (batch), and 'U' (unspecified) dimensions of X for each channel in the 'C' (channel) dimension, independently.

For unformatted input data, use the 'DataFormat' option.
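A minimal sketch of the basic syntax with formatted input might look like the following. The array sizes and the zero offset and unit scale factor are arbitrary choices for illustration, not values prescribed by this page.

```matlab
% Create a mini-batch of 16 random 28-by-28 images with 3 channels.
% These sizes are assumptions chosen only for illustration.
miniBatchSize = 16;
inputSize = [28 28];
numChannels = 3;
X = rand(inputSize(1),inputSize(2),numChannels,miniBatchSize);

% Label the dimensions: spatial, spatial, channel, batch.
X = dlarray(X,'SSCB');

% One offset and one scale factor per channel.
offset = zeros(numChannels,1);
scaleFactor = ones(numChannels,1);

% Normalize each channel using the mean and variance of X itself.
Y = batchnorm(X,offset,scaleFactor);

% Inspect the size and dimension labels of the result.
size(Y)
dims(Y)
```

Because X is already formatted, no 'DataFormat' option is needed, and Y carries the same dimension labels as X.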

[Y,popMu,popSigmaSq] = batchnorm(X,offset,scaleFactor) applies the batch normalization operation and also returns the population mean and variance of the input data X.

[Y,updatedMu,updatedSigmaSq] = batchnorm(X,offset,scaleFactor,runningMu,runningSigmaSq) applies the batch normalization operation and also returns the updated moving mean and variance statistics. runningMu and runningSigmaSq are the mean and variance values after the previous training iteration, respectively.

Use this syntax to maintain running values for the mean and variance statistics during training. When you have finished training, use the final updated values of the mean and variance for the batch normalization operation during prediction and classification.

Y = batchnorm(X,offset,scaleFactor,trainedMu,trainedSigmaSq) applies the batch normalization operation using the mean trainedMu and variance trainedSigmaSq.

Use this syntax during classification and prediction, where trainedMu and trainedSigmaSq are the final values of the mean and variance after you have finished training, respectively.
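The training and prediction syntaxes above can be combined roughly as in the sketch below. The loop length, the random data standing in for real mini-batches, and the initial running statistics (zeros for the mean, ones for the variance) are all assumptions made for illustration; a real training loop would also update offset and scaleFactor from gradients.

```matlab
numChannels = 3;
offset = zeros(numChannels,1);
scaleFactor = ones(numChannels,1);

% Assumed starting values for the running statistics.
runningMu = zeros(numChannels,1);
runningSigmaSq = ones(numChannels,1);

% Training: request three outputs so that the function returns
% the updated moving mean and variance after each iteration.
numIterations = 5;   % hypothetical number of iterations
for iteration = 1:numIterations
    % Random data stands in for a real mini-batch.
    X = dlarray(rand(28,28,numChannels,16),'SSCB');
    [Y,runningMu,runningSigmaSq] = batchnorm(X,offset,scaleFactor, ...
        runningMu,runningSigmaSq);
end

% Prediction: request one output so that the final statistics
% are used as the trained mean and variance.
XTest = dlarray(rand(28,28,numChannels,4),'SSCB');
YPred = batchnorm(XTest,offset,scaleFactor,runningMu,runningSigmaSq);
```

The two calls take the same inputs; the number of requested outputs determines whether the statistics are updated or applied as fixed values.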

[___] = batchnorm(___,'DataFormat',FMT) applies the batch normalization operation to unformatted input data with format specified by FMT using any of the input or output combinations in previous syntaxes. The output Y is an unformatted dlarray object with dimensions in the same order as X. For example, 'DataFormat','SSCB' specifies data for 2-D image input with the format 'SSCB' (spatial, spatial, channel, batch).
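For unformatted input, a sketch along these lines shows the 'DataFormat' option in use. The array sizes are again arbitrary and chosen only for illustration.

```matlab
numChannels = 3;

% Unformatted dlarray: no dimension labels are attached.
X = dlarray(rand(28,28,numChannels,16));

offset = zeros(numChannels,1);
scaleFactor = ones(numChannels,1);

% Describe the layout of X at call time instead of formatting it.
Y = batchnorm(X,offset,scaleFactor,'DataFormat','SSCB');

% Y has its dimensions in the same order as X.
size(Y)
```

This form is convenient when the data passes through code that does not preserve dlarray formats.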

[___] = batchnorm(___,Name,Value) specifies additional options using one or more name-value pair arguments. For example, 'MeanDecay',0.3 sets the decay rate of the moving average computation.
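As a hedged illustration of what a decay rate means here, a moving average update of the mean is commonly written as below. The symbols are assumptions introduced for this sketch: $\lambda_\mu$ is the decay value, $\hat{\mu}$ is the mean of the current input, $\mu$ is the previous running mean, and $\mu^{*}$ is the updated running mean. Check the option's reference entry for the exact convention before relying on it.

$$
\mu^{*} = \lambda_\mu \hat{\mu} + (1 - \lambda_\mu)\,\mu
$$

Under this convention, 'MeanDecay',0.3 would give the current mini-batch mean a weight of 0.3 and the previous running mean a weight of 0.7.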