Train a nonlinear autoregressive with external input (NARX) neural network and use it to predict new time series data. Predicting a sequence of future values of a time series is also known as multistep prediction. Closed-loop networks can perform multistep predictions: when measured values of the target series (external feedback) are no longer available, a closed-loop network continues to predict by feeding its own outputs back as inputs (internal feedback). In NARX prediction, future values of a time series are predicted from past values of that same series (the feedback input) and from an external time series.
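In equation form, one common way to write the NARX model is shown below. The symbols are chosen here for illustration: $y$ is the target (feedback) series, $x$ is the external input series, $n_y$ and $n_x$ are the largest feedback and input delays, and $f$ is the nonlinear mapping the network learns.

$$
y(t) = f\big(y(t-1), \ldots, y(t-n_y),\; x(t-1), \ldots, x(t-n_x)\big)
$$

With input and feedback delays of 1 and 2, for example, the network predicts $y(t)$ from $y(t-1)$, $y(t-2)$, $x(t-1)$, and $x(t-2)$.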

Load the simple time series prediction data.

Partition the data into training data XTrain and TTrain, and data for prediction XPredict. Use XPredict to perform prediction after you create the closed-loop network.

Create a NARX network. Define the input delays, feedback delays, and size of the hidden layers.
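To show what the input delays, feedback delays, and hidden layer size control, here is a minimal conceptual sketch in Python (not the MATLAB toolbox API). It assumes one hidden layer of `tanh` units and a linear output; `make_narx` and `narx_step` are hypothetical helpers, and the weights are random rather than trained.

```python
import math
import random

def make_narx(input_delays, feedback_delays, hidden_size, seed=0):
    """Random (untrained) weights for a one-hidden-layer NARX sketch. Hypothetical helper."""
    rng = random.Random(seed)
    n_regressors = len(input_delays) + len(feedback_delays)
    return {
        "input_delays": list(input_delays),
        "feedback_delays": list(feedback_delays),
        "W1": [[rng.uniform(-0.5, 0.5) for _ in range(n_regressors)] for _ in range(hidden_size)],
        "b1": [rng.uniform(-0.5, 0.5) for _ in range(hidden_size)],
        "w2": [rng.uniform(-0.5, 0.5) for _ in range(hidden_size)],
        "b2": 0.0,
    }

def narx_step(net, x, y, t):
    """Output at step t from delayed external inputs x and delayed feedback values y."""
    max_delay = max(net["input_delays"] + net["feedback_delays"])
    if t < max_delay:
        raise ValueError("t must be at least the largest delay")
    # Only past values enter: x[t - d] and y[t - d] for each configured delay d.
    regressors = ([x[t - d] for d in net["input_delays"]]
                  + [y[t - d] for d in net["feedback_delays"]])
    # Hidden layer: one tanh unit per hidden neuron.
    hidden = [math.tanh(sum(w * r for w, r in zip(row, regressors)) + b)
              for row, b in zip(net["W1"], net["b1"])]
    # Linear output layer.
    return sum(w * h for w, h in zip(net["w2"], hidden)) + net["b2"]

net = make_narx(input_delays=[1, 2], feedback_delays=[1, 2], hidden_size=10)
x = [0.1, 0.4, -0.2, 0.3]
y = [0.0, 0.2, 0.1, -0.1]
print(narx_step(net, x, y, t=2))
```

The delays fix how many past values feed the hidden layer (here 2 + 2 = 4 regressors), and the hidden layer size fixes how many `tanh` units combine them.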

Prepare the time series data using preparets. This function automatically shifts input and target time series by the number of steps needed to fill the initial input and layer delay states.
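The shifting that this step performs can be illustrated with a simplified Python sketch. It is not MATLAB's `preparets`: `prepare_series` is a hypothetical helper that assumes a single largest delay `max_delay` shared by the input and feedback series, and it only shows how the first steps fill the delay states while the rest become aligned inputs and targets.

```python
def prepare_series(x, t, max_delay):
    """Split x (external input) and t (target) into initial delay states and aligned sequences."""
    if len(x) != len(t):
        raise ValueError("x and t must have the same length")
    if len(x) <= max_delay:
        raise ValueError("series must be longer than the largest delay")
    # The first max_delay steps only fill the tapped delay lines (the initial states).
    xi = list(zip(x[:max_delay], t[:max_delay]))
    # From step max_delay on, each step has an (input, feedback) pair and a target to predict.
    xs = list(zip(x[max_delay:], t[max_delay:]))
    ts = list(t[max_delay:])
    return xs, xi, ts

xs, xi, ts = prepare_series([0, 1, 2, 3, 4], [10, 11, 12, 13, 14], max_delay=2)
print(xi)
print(xs)
print(ts)
```

With a largest delay of 2, a 5-step series yields 2 initial states and 3 aligned training steps.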

A recommended practice is to create and train the network in open-loop form, and then convert it to closed-loop form for multistep-ahead prediction. The closed-loop network can then predict as many future values as you want. If you simulate the network in closed-loop mode only, it can perform as many predictions as there are time steps in the input series.

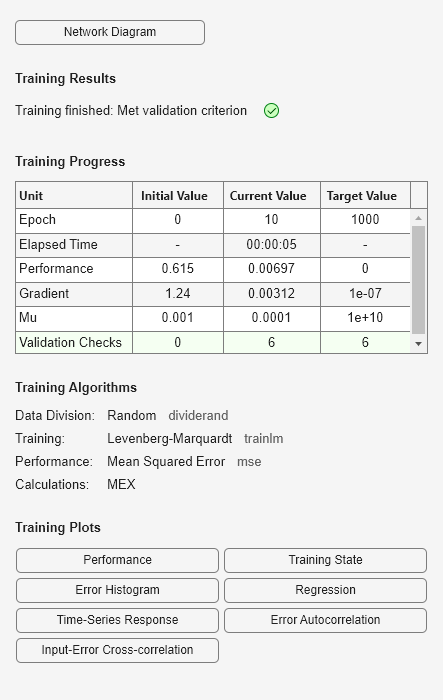
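The difference between the two forms can be sketched in Python with delays of 1 and 2. The rule `f` below is a hypothetical fixed stand-in for a trained network, and `open_loop` and `closed_loop` are illustrative helpers, not toolbox functions. In open loop, the feedback delays hold measured targets; in closed loop, they hold the model's own earlier predictions, so it returns one prediction per external input step.

```python
def f(x1, x2, y1, y2):
    # Hypothetical stand-in for a trained NARX mapping (a fixed linear rule).
    return 0.5 * y1 - 0.2 * y2 + 0.3 * x1 + 0.1 * x2

def open_loop(x, y_measured):
    """Series-parallel form: the delayed feedback values are measured targets."""
    return [f(x[k - 1], x[k - 2], y_measured[k - 1], y_measured[k - 2])
            for k in range(2, len(x))]

def closed_loop(x_init, y_init, x_future):
    """Parallel form: the delayed feedback values are the model's own predictions."""
    xs = list(x_init) + list(x_future)
    ys = list(y_init)  # two known values fill the feedback delay line
    for k in range(len(x_init), len(xs)):
        ys.append(f(xs[k - 1], xs[k - 2], ys[k - 1], ys[k - 2]))
    return ys[len(y_init):]  # one prediction per future input step

x = [0.1, -0.3, 0.2, 0.5, -0.1, 0.0]
y_meas = [0.0, 0.1, 0.05, -0.2, 0.3, 0.1]  # hypothetical measurements
print(open_loop(x, y_meas))                   # 4 one-step-ahead predictions
print(closed_loop(x[:2], y_meas[:2], x[2:]))  # 4 multistep predictions
```

Both forms agree on the first step, because it uses only known values; afterward the closed-loop predictions drift from the open-loop ones as prediction errors are fed back.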

Train the NARX network. The train function trains the network in an open loop (series-parallel architecture), including the validation and testing steps.
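Why open-loop (series-parallel) training is convenient can be shown without a neural network: because measured targets fill the feedback delays, each time step becomes an ordinary regression row. The sketch below deliberately swaps the neural network for a linear model fitted by least squares; it is not what MATLAB's `train` does, and `fit_open_loop`, `solve`, and the coefficients in `w_true` are hypothetical.

```python
import random

def solve(a, b):
    """Solve the small dense system a @ w = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    w = [0.0] * n
    for r in range(n - 1, -1, -1):
        w[r] = (m[r][n] - sum(m[r][c] * w[c] for c in range(r + 1, n))) / m[r][r]
    return w

def fit_open_loop(x, y):
    """Least-squares fit of y[k] ~ w . [x[k-1], x[k-2], y[k-1], y[k-2], 1] using measured y."""
    rows = [[x[k - 1], x[k - 2], y[k - 1], y[k - 2], 1.0] for k in range(2, len(y))]
    targets = [y[k] for k in range(2, len(y))]
    p = len(rows[0])
    # Normal equations: (A^T A) w = A^T b.
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(p)] for i in range(p)]
    atb = [sum(r[i] * t for r, t in zip(rows, targets)) for i in range(p)]
    return solve(ata, atb)

rng = random.Random(1)
w_true = [0.3, 0.1, 0.5, -0.2, 0.05]  # hypothetical "true" coefficients
x = [rng.uniform(-1, 1) for _ in range(60)]
y = [0.0, 0.0]
for k in range(2, len(x)):
    y.append(w_true[0] * x[k - 1] + w_true[1] * x[k - 2]
             + w_true[2] * y[k - 1] + w_true[3] * y[k - 2] + w_true[4])
w_fit = fit_open_loop(x, y)
print([round(w, 4) for w in w_fit])
```

On this noise-free data the fit recovers `w_true`; the point is that no prediction is fed back during fitting, so every step can be fitted independently.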

Display the trained network.

Calculate the network output Y, final input states Xf, and final layer states Af of the open-loop network from the network input Xs, initial input states Xi, and initial layer states Ai.
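The role of the initial and final states can be sketched in Python as a tapped delay line that is filled from the initial states, shifted once per time step, and returned at the end. This is a conceptual sketch assuming delays of 1 and 2 and a hypothetical linear rule `f`; `simulate_open_loop` is illustrative, and it tracks only input delay states (it has no layer delays).

```python
from collections import deque

def f(x1, x2, y1, y2):
    # Hypothetical stand-in for a trained NARX mapping (delays 1 and 2).
    return 0.5 * y1 - 0.2 * y2 + 0.3 * x1 + 0.1 * x2

def simulate_open_loop(f, xs, xi):
    """Run the sketch over xs from initial states xi; return outputs and final states.

    xs and xi hold (external input, measured target) pairs, oldest first.
    """
    if len(xi) != 2:
        raise ValueError("this sketch assumes delays 1 and 2, so xi needs two entries")
    delay_line = deque(xi, maxlen=2)  # index 0 = delay 2, index 1 = delay 1
    outputs = []
    for pair in xs:
        (x2, y2), (x1, y1) = delay_line[0], delay_line[1]
        outputs.append(f(x1, x2, y1, y2))
        delay_line.append(pair)  # newest pair enters, oldest drops out
    return outputs, list(delay_line)

xi = [(0.1, 0.0), (-0.3, 0.1)]
xs = [(0.2, 0.05), (0.5, -0.2), (-0.1, 0.3), (0.0, 0.1)]
outputs, xf = simulate_open_loop(f, xs, xi)
print(outputs)
print(xf)  # the last two input pairs: the final delay states
```

The final states are simply the delay-line contents after the last step, which is what lets a later simulation continue exactly where this one stopped.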

Calculate the network performance.
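As a sketch of what a performance value measures, the Python function below computes mean squared error between aligned targets and outputs. Treating the performance measure as MSE is an assumption here; the measure actually used depends on the network's performance function setting.

```python
def mse(targets, outputs):
    """Mean squared error between aligned target and output sequences."""
    if len(targets) != len(outputs) or not targets:
        raise ValueError("need two non-empty sequences of equal length")
    return sum((t - y) ** 2 for t, y in zip(targets, outputs)) / len(targets)

print(mse([0.1, 0.2, -0.1], [0.1, 0.25, 0.0]))
```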

To predict the output for the next 20 time steps, first convert the network to closed-loop form. The final input states Xf and layer states Af of the open-loop network net become the initial input states Xic and layer states Aic of the closed-loop network netc.
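The hand-off of states can be sketched in Python: the last two (external input, target) pairs from the open-loop run seed the closed-loop delay lines, and each prediction is then fed back as the next feedback value. The rule `f`, the helper `predict_closed_loop`, and the numeric values are hypothetical, and delays of 1 and 2 are assumed.

```python
def f(x1, x2, y1, y2):
    # Hypothetical stand-in for the trained mapping.
    return 0.5 * y1 - 0.2 * y2 + 0.3 * x1 + 0.1 * x2

def predict_closed_loop(f, xf, x_future):
    """Multistep prediction starting from the open-loop final states xf (oldest pair first)."""
    (x2, y2), (x1, y1) = xf  # these play the role of the closed-loop initial states
    predictions = []
    for x_new in x_future:
        y_new = f(x1, x2, y1, y2)
        predictions.append(y_new)
        # Shift both delay lines; the prediction itself becomes the next feedback value.
        x2, x1 = x1, x_new
        y2, y1 = y1, y_new
    return predictions

xf = [(0.2, 0.05), (0.5, -0.2)]              # hypothetical final open-loop states
x_future = [0.1 * k for k in range(20)]      # hypothetical external inputs for 20 steps
preds = predict_closed_loop(f, xf, x_future)
print(len(preds))
```

Because the closed-loop run starts from the states where the open-loop run ended, no measured target values are needed for the 20 predicted steps.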

Display the closed-loop network.

Run the prediction for 20 time steps ahead in closed-loop mode.

```
Yc = 1×20 cell array

{[-0.0156]} {[0.1133]} {[-0.1472]} {[-0.0706]} {[0.0355]} {[-0.2829]} {[0.2047]} {[-0.3809]} {[-0.2836]} {[0.1886]} {[-0.1813]} {[0.1373]} {[0.2189]} {[0.3122]} {[0.2346]} {[-0.0156]} {[0.0724]} {[0.3395]} {[0.1940]} {[0.0757]}
```