AI Applications in Machine Tools Design and Operation

Dr. Sergei Schurov, GF Machining Solutions

Machine tools remain the major vehicle driving technological progress today. This industry segment is highly influenced by rapid evolution in Industry 4.0 technologies, including machine connectivity, Internet of Things (IoT), manufacturing process simulation, production flow modelling, and cloud-based analytics for predictive maintenance.

Artificial intelligence and machine learning technologies have a special place in the industrial IoT. Driven by increasing manufacturing performance needs together with growing technology complexity on one side and the reducing base of skilled operators combined with shrinking product cycle times and time to market on the other, machine tool makers have to respond in a smart way to meet expectations in productivity gains. This expected change applies both to end users, such as machine operators and workshop managers, and to product development teams of machine tool builders.

As a major industrial player, GF Machining Solutions is at the forefront of integrating machine learning technologies into its products. In this presentation, Dr. Sergei Schurov discusses several applications of such algorithms to the GF Machining Solutions portfolio and explores the benefits and challenges of bringing them into new system designs while remaining competitive in the market.

Recorded: 23 May 2019

Good morning, everybody. It's great to be here. Three years since the last presentation.

I would like to talk a little bit about how we apply machine learning, artificial intelligence, and all those nice techniques to the real world to deliver value to our customers. So I call this AI applications in machine tools, but I will start with what I read in the news this morning on the train. It came in the news feed.

You can see that artificial intelligence mentions have overtaken blockchain in quarterly earnings calls. Okay, and I thought it was kind of appropriate today because maybe none of us works in finance, but at least all of us know what a derivative is. So, okay.

And today, I will talk about AI and its transition to industrial reality. But first of all, a few words about the company. Georg Fischer is really a pioneer in the industry. It was founded in 1802 in Switzerland.

And if you remember the history, that was bang in the middle of Industrial Revolution number one. And Georg Fischer was both the beneficiary and the product of this Industrial Revolution. So it was one of the first to transition from hand production to machine production, and to machine tools later on.

Now we are living in the age of Industry 4.0. But before, there were two other revolutions, starting with 2.0 and Henry Ford, which brought the assembly lines. And you remember the famous quote by Henry Ford that, you know, you can order any Model T Ford as long as it's black.

And later on came mass customization, which is probably where we are right now.

You know from the automotive, you know from your car experience, that you can select and choose any car you like, any specifications. And really, the choice is limitless. As a supplier to the industry, as a provider of industry solutions, we offer a wide range of products and technologies. And really, it becomes perplexing and complicated even to select which is the most appropriate technology. So our answer is to offer system solutions with which we can meet customer expectations for increasingly fast production and product development cycles.

When I was speaking three years ago at this event in Berlin, I was asking the question, so what comes next? And I was saying that perhaps in the framework of Industry 4.0, we need to be looking at smart factories, at self-learning machines, and at intelligent machines. So now we are here. So what has happened in the three years that have passed?

Well, I said already that the fourth Industrial Revolution is here with us. Well, okay, we had some introductions about what it is all about. And we know that the fourth Industrial Revolution, Industry 4.0, brings connectivity.

But it's not the only thing that it brings. It is really bringing transformation to the entire manufacturing industry value chain. What do I mean by this? We heard earlier about agile. Agile is everywhere.

Agile is helping to accelerate the development cycles, to offer a faster time from the idea to the concept, and to offer multiple iterations. This is agile. Another tool that we heard about earlier, in the presentation from Andrew, is design thinking.

Design thinking is connecting engineers with customers. How often have you heard the stories that, you know, you develop a wonderful product and you bring it out to the market, and customers say, well, why did you do this? Do I really need it? So this is where design thinking comes in. It really allows you to get early customer feedback based on an early prototype, validate, iterate, come back to the design board, and repeat, all in quick succession.

And all this becomes possible through the use of modeling and simulation, which is where MathWorks and MATLAB stand right now, with Simulink and Stateflow, right in the middle. The model order reduction techniques cut down the simulation times. Machine learning and artificial intelligence bring the power to the models with edge or cloud-based computing tools. You can often make the choice between the two.

And really, what is significant is that all this is driving down the development cycle time. In the past, I mean, Georg Fischer is a brick-and-mortar company. We started with metal production. We started with piping production. We continued with machine tool production.

Now we're turning into a software company. And this is really a significant paradigm shift. And as you see here, the software is not only about the technology. It is bringing a change in the way we work, so the product development cycles are no longer driven as much by mechanical prototyping, with its lengthy iterative cycles. Instead, they are becoming driven by the models.

And I think this is a really important point to retain from this talk. Now let us have a look at some of the building blocks that we use in the development of our products and solutions. So there is a range of technologies where AI and ML can be applied. And we really apply this throughout the entire product lifecycle and value chain, starting with development, through product operation in service at the customer, and into the maintenance phase, when we can help the customer to ensure the continued reliability and availability of the product. So AI brings value across the entire manufacturing value chain.

A few more words about the company. As you heard, we offer a wide range of products starting from milling, then electrical discharge machining and laser, which are already nonconventional technologies, and additive manufacturing, which is even further nonconventional. And all of this is complemented by tooling and automation and now by the injection of digital thinking, of digital transformation, of Industry 4.0. I mentioned Georg Fischer was founded in 1802, and it is a Swiss-based company with around 15,000 employees at the end of last year and revenue of 4.5 billion Swiss francs.

We are present pretty much all over the world, in 50-plus countries, with 140 sales companies and 57 production plants. And there are three divisions: piping, casting solutions, and machining solutions. So today, I represent GF Machining Solutions. And as a company, we consider ourselves leaders in precision machine tools, automation solutions, and customer services for the production of value-added components, value-added parts, again, with a global presence and headquarters in Switzerland.

So now back to the slide about our technologies and solutions. I mentioned development, operation, and maintenance. And let us have a look at some of the examples, starting with operations. Here I can speak about artificial intelligence for zero-defect manufacturing. It is an important topic: when you buy something, you want to make sure that it works.

And the example I show here is the electro erosion machine. And a few words before I go into technical details, a few words about the technology that you might not be familiar with. EDM is a preferred technology for the tough materials. Why?

Because it works by electro erosion. That means you remove small chunks of material by producing an electrical spark. And the benefit of the process is that it is insensitive to how hard the material is. That means you can work with the hardest material in the world as long as it is conductive. And the wire follows the CNC path. And you can follow any shape or any track that you choose in your CAM and produce the desired result or desired output.

So far, so good. However, the process is fairly complex. And if it is not well controlled, it can produce defects. As you can see here, those two small lines, small as they appear, can act as stress concentrators. And this can lead to some unfortunate events like part failure in service.

Clearly, you want to avoid this, and the process abnormalities must be dealt with in advance. The good news is that the EDM process is a fully monitored process. We know exactly what is happening at every moment in time in terms of current, in terms of voltage, in terms of the axis position on the machine. We know everything.

But if you try to go through the direct analysis, you won't get far because, as you can see here, for example, all these are valid pulses that may occur during the normal erosion process. These are valid: not necessarily what you desire to have, but they will not necessarily produce defects. So the normal pulse, the reduced pulse, even the open circuit: none of this is synonymous with a defect.

What we did was to group those pulses, or other features from the current and from the voltage, into certain categories. And then we applied machine learning using 50 sample sets, which were split into a training set and a test set, then applied a three-layer feed-forward neural network with backpropagation and came up with the results correlating the predicted data with the measured data, with the control data. What you can see here is that the results which show the best correlation do not necessarily correspond to the highest number of nodes.

So 21 nodes gave 97%, and six nodes gave 100%. How come? So the challenge for the engineers was to optimize the model to make sure it has the right level of complexity, but at the same time, it has the right level of accuracy as well.
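The observation that a smaller network can match or beat a larger one is usually checked by sweeping the hidden-layer size and scoring each candidate on held-out samples. The following is a minimal sketch of such a sweep, not the model from the talk: the features and labels are synthetic stand-ins, and `make_samples`, `train_and_score`, and `sweep_hidden_sizes` are hypothetical names. Only the 50 samples, the train/test split, and the node counts of 6 and 21 come from the talk.

```python
import math
import random


def make_samples(n, rng):
    """Synthetic stand-in for pulse-category features (NOT real EDM data).

    Each sample is [normal_fraction, short_fraction]; it is labelled as a
    defect (1) when the share of short-circuit pulses dominates.
    """
    samples = []
    for _ in range(n):
        normal, short = rng.random(), rng.random()
        samples.append(([normal, short], 1 if short > normal else 0))
    return samples


def train_and_score(hidden, train, test, rng, epochs=200, lr=0.2):
    """Train an input/hidden/output network by backpropagation; return test accuracy."""
    n_in = len(train[0][0])
    # Each row holds the input weights followed by a bias term.
    w1 = [[rng.uniform(-1, 1) for _ in range(n_in + 1)] for _ in range(hidden)]
    w2 = [rng.uniform(-1, 1) for _ in range(hidden + 1)]

    def forward(x):
        h = [math.tanh(sum(w * v for w, v in zip(row, x)) + row[-1]) for row in w1]
        z = sum(w * v for w, v in zip(w2, h)) + w2[-1]
        z = max(-60.0, min(60.0, z))  # keep exp() in a safe range
        return h, 1.0 / (1.0 + math.exp(-z))

    for _ in range(epochs):
        for x, y in train:
            h, out = forward(x)
            delta_out = out - y  # cross-entropy gradient at the output node
            for j in range(hidden):
                # Back-propagate through tanh before the output weight changes.
                delta_h = delta_out * w2[j] * (1.0 - h[j] ** 2)
                w2[j] -= lr * delta_out * h[j]
                for i in range(n_in):
                    w1[j][i] -= lr * delta_h * x[i]
                w1[j][-1] -= lr * delta_h
            w2[-1] -= lr * delta_out

    hits = sum((forward(x)[1] > 0.5) == (y == 1) for x, y in test)
    return hits / len(test)


def sweep_hidden_sizes(sizes=(2, 6, 21), n_samples=50, seed=0):
    """Return {hidden_size: test_accuracy} for a 70/30 train/test split."""
    data = make_samples(n_samples, random.Random(seed))
    split = n_samples * 7 // 10
    train, test = data[:split], data[split:]
    return {h: train_and_score(h, train, test, random.Random(seed + h)) for h in sizes}
```

Calling `sweep_hidden_sizes()` returns a dictionary mapping each hidden-layer size to its test accuracy. On toy data like this the numbers say nothing about real EDM pulses, but the same loop structure is how one would look for the smallest network that is still accurate enough.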

So let's see how this works in practice. So this is the CAM image of the part where we superimpose the defects, the predicted defects. And you can see in this simulation that those red lines or yellow lines indicate where the defect might happen.

Clearly, on the real part, you will not have as many defects. But when you do have them, this is the point to go to. This is the point where the quality inspector may really want to look at the part to see if it's good or not, as opposed to having to inspect everything, every single millimeter of the part. So I do not want to go too deep into the technique.

This is neural network analysis with the correlation between the predicted data and the measured data to train the model. What was an interesting learning for us is that each machine and each geometry has a separate machine learning signature, and it needs to be trained. And another learning, as I mentioned earlier, is that accuracy does not always increase with the node count of the neural network.

Okay, so that was an example of zero-defect manufacturing. Okay, you need to go to the next slide. So let's now have a look at an example in development. So this is where the development engineers, the application engineers, use their process knowledge and their machine learning techniques to develop a customized application.

Why a customized application? Because very often, the standard out-of-the-box solutions are no longer good enough for the end user, and they want to push us to go deeper and more specialized to their field, to their special needs. Again, an example from EDM: what you saw earlier is the wire EDM machine.

EDM can also exist in a slightly different application, where you use thin electrodes to drill holes. Why do you need to drill holes? For example, in an aero engine, the gas temperature can exceed 1,200 degrees or even higher.

And typically the [INAUDIBLE] material, the nickel alloys, start to degrade in performance at temperatures of 800 or 850 degrees or above. So the solution is to cool the engine blades. And this is done by drilling cooling holes. EDM drilling is the unique solution for hard metals. So it is highly applicable in this case.

But now we do the simple sums: 250 cooling holes multiplied by 40 blades in a single stage multiplied by eight stages multiplied by several thousand engines produced per year. And you have tens of millions or hundreds of millions of holes to do. The time to produce a single hole is around 5 to 10 seconds. That means that every single second counts.
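To make the sums concrete, here is the arithmetic spelled out as a short sketch. The hole, blade, and stage counts are the ones quoted; the figure of 2,000 engines per year is an assumption standing in for "several thousand", and 10 seconds is the upper end of the quoted drilling time.

```python
holes_per_blade = 250
blades_per_stage = 40
stages = 8
engines_per_year = 2_000   # assumption: the talk only says "several thousand"
seconds_per_hole = 10      # upper end of the 5 to 10 s quoted

holes_per_engine = holes_per_blade * blades_per_stage * stages
holes_per_year = holes_per_engine * engines_per_year

# Total drilling time per year, and what shaving one second per hole is worth.
machine_hours = holes_per_year * seconds_per_hole / 3600
hours_saved_per_second = holes_per_year / 3600

print(holes_per_engine, holes_per_year, round(machine_hours), round(hours_saved_per_second))
```

Under these assumptions that is 80,000 holes per engine and 160 million holes per year, or roughly 444,000 machine hours of drilling, so each second saved per hole is worth on the order of 44,000 machine hours a year.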

Now we can go fast, but we don't want to lose track of the quality. The EDM process is complex in that there are over 100 variables to control. Not all of them are equally important. But even if you narrow this down to 5, 10, or 15 variables, they really must be maintained at the optimum.

And finding the optimum is a really, really, really big challenge. Why so? Because the process variables are interdependent. There are local minima. There is process noise. The applications have to be material specific.

And finally, look at this. Spark is really stochastic. You never know when it will occur. You cannot predict whether it's here or over there or over here. So you have to deal with this in a statistical way, in a statistical format.

What you want to achieve is a result like this. And if you're not careful, you can get that. That means that the spark concentration was in the wrong place, and it created a bulge that is clearly undesirable.

So the process must be optimized to maximize cutting speed without loss of quality. What do we do? I think this is an example of combining field expert knowledge, which comes from our expert engineer or application engineer or even operator, with what we call the application champion or application expert, who can tune the machine learning input and channel this with the fusion rules to produce the optimum parameter set for the machine. So we use a technique which we call a stochastic optimization algorithm to find the process minima.
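As an illustration of what a stochastic search over process parameters can look like, here is a minimal simulated-annealing-style sketch. It is not the algorithm used on the machine, which the talk does not specify: the cost surface in `process_cost` is a hypothetical toy with local minima and measurement noise, and all names and constants are assumptions.

```python
import math
import random


def process_cost(params, rng):
    """Hypothetical noisy cost surface with local minima (not a real EDM model)."""
    smooth = sum((p - 0.6) ** 2 for p in params)
    ripples = 0.05 * sum(math.sin(25.0 * p) for p in params)
    noise = rng.gauss(0.0, 0.01)
    return smooth + ripples + noise


def averaged_cost(params, rng, repeats=5):
    """Average repeated trials, since a single noisy measurement can mislead."""
    return sum(process_cost(params, rng) for _ in range(repeats)) / repeats


def stochastic_search(n_params=5, iterations=2000, seed=1):
    """Return (starting cost, best cost found, best parameter set)."""
    rng = random.Random(seed)
    current = [rng.random() for _ in range(n_params)]
    current_cost = averaged_cost(current, rng)
    start_cost = current_cost
    best, best_cost = list(current), current_cost
    for step in range(iterations):
        # The temperature shrinks over time, so late moves become more conservative.
        temperature = 0.1 * (1.0 - step / iterations) + 1e-6
        candidate = [min(1.0, max(0.0, p + rng.gauss(0.0, 0.05))) for p in current]
        cost = averaged_cost(candidate, rng)
        # Always accept improvements; sometimes accept worse points to escape local minima.
        if cost < current_cost or rng.random() < math.exp((current_cost - cost) / temperature):
            current, current_cost = candidate, cost
            if cost < best_cost:
                best, best_cost = list(candidate), cost
    return start_cost, best_cost, best
```

The occasional acceptance of a worse candidate is what lets a search like this climb out of a local minimum, and averaging repeated trials is one simple way to cope with a process whose individual measurements are noisy.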

A short demonstration of what it means: what you see here on the left is the set of parameters that we want to control. And what you see on the right is the set of outputs that we want to achieve. The first one is the material removal rate. The second one is the time to drill the hole, and the third output is the electrode wear. Clearly, you want to maximize the first one, and you want to minimize the other two. So let us see what happens.

So we start the simulation, and we allow the machine learning to look at the output result and to tune its behavior to make sure that you get to the optimum characteristics of those three output parameters. The interesting conclusion for us: we have the experts. We have the best people in the industry, who know how to tune the machine.

The machine learning algorithms can do better. Every time, in every iteration, we found an improvement of 10% to 15%, not because our experts are not good enough, but because the complexity of the process is such that it is becoming more and more difficult to find the right set of parameters simply based on experience. Okay, so let's have a look at another example. And this time, we'll be looking at maintenance.

Okay, so we heard earlier today about residual useful lifetime prediction. This is important because when you are a user, when you have bought a nice and beautiful machine, you don't want to lose time unexpectedly when the machine breaks down. You want to know when this machine needs to be maintained and when it needs to be taken out of service in a predicted, programmed way. Our customers optimize their workflow planning to such an extent that they can guarantee short delivery times to their own customers.

What are the users' expectations? First of all, they want to know in advance when the machine needs to be maintained. And second, they don't necessarily want to pay more for this than necessary. You know, it's like with your car.

When you bring the car to the garage because it indicated 20,000 kilometers, you know, in the back of your mind, you have the thought, you know, do I really need to do this now, or can I drive for another 10,000? Same for us.

The customer said, do I really need to maintain now the machine, or can I wait for another, I don't know, one week, one month, whatever? It's not easy to give the answer, neither for the car nor for our machine tools.

Why? Because there is no automatic maintenance indication. The machine will not tell you, or the car will not tell you, when it's about to break down unless you help it, yeah? The other thing is that the data are available, of course. But there is a huge amount of this data.

One CNC machine generates a couple of terabytes of data per month, or even more if it is equipped with additional sensors. And the data come from different sources, which are heterogeneous. That means that connecting this information is not that straightforward. You heard earlier today that time synchronization between different channel inputs is not an easy task. And as I said, intervention ahead of time increases costs unnecessarily, not only for the customer but also for the supplier, for a provider like us, because very often, the cost of maintenance is built into the cost of the product these days.

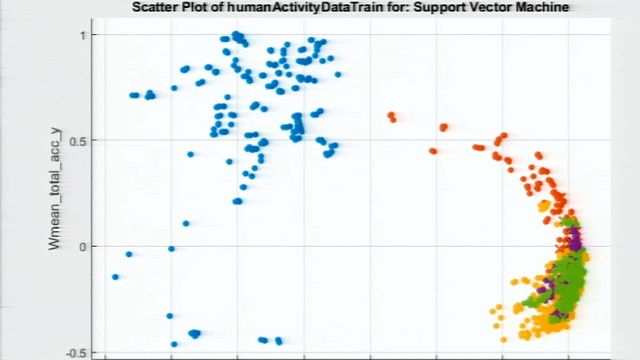

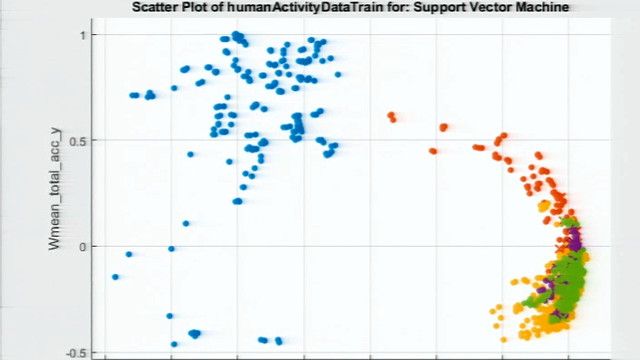

So what was our approach? We used a hybrid condition monitoring technique where we combine supervised learning and unsupervised learning to estimate the residual useful lifetime and to predict unexpected failure, right? So both run concurrently to provide the user with information about when to intervene and to tell him the amount of time that he has left before the intervention becomes mandatory.

How do we use this? We apply the Gaussian model, adaptive Gaussian model, to visualize deviation from normal. So first of all, we convert symmetrical Gaussian bell curve to probabilistic mixture model distribution.

And this is the work we did together with colleagues from EPFL. Then we applied the predictive maintenance algorithm by clustering data and providing the training data set. And then finally, we calculated the residual useful lifetime, deriving the distance residual from the regression-calculated mean as a failure probability measure. A bit of a mouthful here, but basically, what it means is that this curve is an indication of where the failure might occur. And the closer to the curve you are, the more likely you are to have this failure.
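The idea of scoring a reading by its distance from a learned mean can be sketched in a few lines. This is a deliberately simplified illustration that uses a single Gaussian fitted to healthy data rather than the adaptive mixture model described above; `fit_baseline`, `deviation_score`, and the sample readings are all hypothetical.

```python
import math
import statistics


def fit_baseline(healthy_readings):
    """Mean and sample standard deviation of readings taken in a healthy state."""
    return statistics.fmean(healthy_readings), statistics.stdev(healthy_readings)


def deviation_score(value, mean, std):
    """Fraction of the healthy distribution that lies closer to the mean than `value`.

    For a Gaussian, P(|Z| < z) = erf(z / sqrt(2)), so the score runs from 0
    (exactly at the mean) towards 1 (far out in the tails).
    """
    z = abs(value - mean) / std
    return math.erf(z / math.sqrt(2.0))


# Made-up vibration amplitudes from a machine known to be healthy.
healthy = [0.98, 1.02, 1.00, 0.99, 1.01, 1.03, 0.97, 1.00]
mean, std = fit_baseline(healthy)

for reading in (1.00, 1.03, 1.10):
    print(reading, round(deviation_score(reading, mean, std), 4))
```

A score near 0 means the reading looks like normal operation, while a score near 1 means it sits far outside what the healthy data would explain. A mixture model extends the same idea to several operating regimes, each with its own mean and spread.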

So let's see an example of how this runs with the dashboard. This is what we provide to our customer. This is not the EDM case this time. We're looking at the milling machine example, where we need to help the user understand how long the machine will last before it needs to be maintained.

In this case, we have the 639 days, for example, which is good enough. Machine can operate as normal. Then at some point, the set of parameters will indicate to the algorithm that probability of failure is increasing, but machine can still continue to operate. Later on, the probability of failure in our estimation is 100%.

So we really advise the user, now it's the time to maintain the machine. And we continue to monitor the parameters. And once everything has been put back in order, the machine availability can return back to normal.
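One simple way to turn a monitored indicator into a "days remaining" figure is to fit a trend and extrapolate it to a failure level. The sketch below uses ordinary least squares for this; it is a swapped-in illustration, not the method behind the dashboard, and the function name, the indicator values, and the failure level are assumptions.

```python
def remaining_useful_life(days, indicator, failure_level):
    """Days until a least-squares trend line reaches `failure_level`, or None."""
    n = len(days)
    mean_t = sum(days) / n
    mean_y = sum(indicator) / n
    sxx = sum((t - mean_t) ** 2 for t in days)
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(days, indicator))
    slope = sxy / sxx
    if slope <= 0:
        return None  # no upward wear trend, so no crossing is predicted
    intercept = mean_y - slope * mean_t
    crossing_day = (failure_level - intercept) / slope
    # Never report a negative lifetime if the level has already been passed.
    return max(0.0, crossing_day - days[-1])


# Made-up wear indicator sampled every 10 days, with failure assumed at 2.0.
rul = remaining_useful_life([0, 10, 20, 30], [1.0, 1.1, 1.2, 1.3], 2.0)
print(rul)
```

With these invented numbers the indicator rises by 0.01 per day, so the trend reaches 2.0 on day 100, which is 70 days after the last measurement. Re-running the fit as new data arrive is what makes the estimate shrink or recover over time.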

So the last example I want to give is about the built-in metrology. Why built-in metrology? Because in some cases, the operations or the application or the machine in production has to be iterative or interactive, if you want.

So you produce a result, and you're not 100% sure if it's good enough yet or if you need to wait a little bit more. It's like in the kitchen, you know? Like, you're cooking your dinner. You really need to taste it sometimes until you know whether it's good or not. So this is where built-in metrology becomes helpful.

I will give an example in the domain of functional surfaces, one of Mother Nature's magic tricks. What is a functional surface about? Have a look at this video.

And while you're looking at this nice video, which is actually explaining hydrophobic surfaces, the functional surfaces that repel liquid, I can give a few comments about the applications. Applications are in self-cleaning for automotive, turbines, jets, and domestic appliances; in antimicrobial surfaces for medical applications; in anti-icing, again for aerospace and other industries; and then in anti-adhesion. For example, you don't want parts to stick together too much. In a mold, for example, you want the plastic to separate easily from the mold part, from the molding machine.

And these characteristics are typically measured by measuring roughness. Roughness is Ra. Basically, it's the peak-to-peak distance between the troughs and the peaks on the part.

The problem is that, as you can see here, roughness is not giving all the data that you need, because in the case on the left, you have the standard roughness, a standard component which is not a functional surface. It will not be hydrophobic. It will not be water repellent. And on the right, it is a functional surface.

Ra is the same. Peak-to-peak distance is the same. But you can look at the profile. You can look at the micro image of the surfaces, and you can see that it's clearly different. And then the behavior, of course, will be different.
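That a single roughness number can hide very different surfaces is easy to show numerically. The sketch below takes Ra in its usual definition, the arithmetic mean of the absolute height deviations from the mean line, and compares two made-up profiles: one with narrow peaks and one that is the same profile flipped into narrow valleys. The skewness of the height distribution (the Rsk parameter in surface metrology) is used here as one example of a descriptor that does tell them apart.

```python
def ra(profile):
    """Arithmetic mean of absolute height deviations from the mean line."""
    mean_line = sum(profile) / len(profile)
    return sum(abs(z - mean_line) for z in profile) / len(profile)


def skewness(profile):
    """Skewness of the height distribution: positive for peaks, negative for valleys."""
    n = len(profile)
    mean_line = sum(profile) / n
    rq = (sum((z - mean_line) ** 2 for z in profile) / n) ** 0.5
    return sum((z - mean_line) ** 3 for z in profile) / (n * rq ** 3)


spiky = [0.0, 0.0, 0.0, 4.0] * 5   # flat plateau with narrow peaks (made-up heights)
pitted = [-z for z in spiky]        # same shape flipped: narrow valleys

print(ra(spiky), ra(pitted))
print(round(skewness(spiky), 3), round(skewness(pitted), 3))
```

Both profiles have exactly the same Ra, yet their skewness has opposite sign. This is the kind of information that a roughness value alone cannot carry and that an image-based analysis of the surface can pick up.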

So if we have built-in metrology, in addition to identifying the surface, we can detect the defects and hopefully correct them whilst the part is still in place. We can optimize the whole process using the self-learning algorithms and automation as we have seen earlier on. And of course, another added value of built-in metrology is that you can measure the dimensional accuracy of the part and correct this also if necessary.

So in situ characterization is required to achieve the desired properties. How do we do this? We have a tool that is called surface interpreter.

First of all, we prepare the image training set. Then we use image processing algorithms. And then we can evaluate the results using a regression or a classification technique. Regression derives the surface roughness using the training data, and classification estimates which type of technology was used to produce the surface: either the functional surface technology or the conventional surface technology.

So image analysis uses convolutional neural networks to recognize fingerprints. And here is an example of how this is working. So on the left, you have the input image. And the system, using the machine learning analytics, would estimate the Ra value, giving the predicted results for these three examples.

And at the same time, it will also estimate whether this is a functional surface like here or if it's a conventional standard technology that was used to produce this particular part. So this can be done using the edge PC. In fact, it is done using the edge PC so that the user can run all those applications on his own site without needing to resort to the cloud. But if he does use the cloud, then we can enhance the algorithm, and he can benefit from better accuracy as well.

So that was the last example. And I would like just to say a few words in conclusion about where we are now and what would be the next steps. So we saw that the development, the artificial intelligence, is working in all elements of the process chain of development, operations, and maintenance.

So for the development, we apply machine learning for fast track technology development as we saw with the drilling machine. For the operations, we can assist users to ensure the zero-defect manufacturing, provide the flexibility and operational effectiveness, defect recognition, and so on. And in the maintenance, we can predict the residual useful lifetime and ensure that service can be done just in time—not too late, not too early, but just in time to maintain the machine availability.

Finally, a rhetorical question: Can we afford not to AI? Okay, I think the investors already gave us the answer. But just looking back in the industry fundamentals, so accelerating customer demands for application-specific solutions—as I said, standard is not good enough.

You need to be specialized. You need to be specific. Each customer has his own requirements. And in order to scale up, we cannot continue to work as usual.

Manufacturing value chains grow in complexity and need to be adaptive. There's a continuous drive to improve the performance productivity—continuous request to do better, faster, smoother, and so on without costly and error-prone human intervention. Humans—don't get me wrong. We will always need the experts.

But the field of application of expert is changing. Now they're becoming like a conductor of the orchestra, to tune, to make sure that every element of the machine learning process is acting at the right time at the right place with the right force. They're no longer just the expert that has all the necessary information, but they know which strings to pull at which moment of time.

Last but not least, we have to watch out for what's happening in Asia. And here you see in the graph that in terms of AI-related patent applications, China is speeding up. They have five times as many patent applications as the United States.

And here we look at the combined data for artificial intelligence and deep learning, which are pooled together. So essentially, the choice for AI is already made. It is self-enabling, and it is accelerating.

And I would like to conclude my talk with this question. In fact, I will not be able to answer what comes next, but I can tell you what is already here now. We are in the middle of 4.0, but the talk is of Industry 5.0, which is all about artificial intelligence and machine learning. Thank you for your attention.