Design Lidar SLAM Algorithm Using Unreal Engine Simulation Environment

This example shows how to record synthetic lidar sensor data from a 3-D simulation environment, and develop a simultaneous localization and mapping (SLAM) algorithm using the recorded data. The simulation environment uses the Unreal Engine® by Epic Games®.

Introduction

Automated Driving Toolbox™ integrates an Unreal Engine simulation environment in Simulink®. Simulink blocks related to this simulation environment can be found in the drivingsim3d library. These blocks provide the ability to:

Select different scenes in the 3D simulation environment

Place and move vehicles in the scene

Attach and configure sensors on the vehicles

Simulate sensor data based on the environment around the vehicle

This powerful simulation tool can be used to supplement real data when developing, testing, and verifying the performance of automated driving algorithms, making it possible to test scenarios that are difficult to reproduce in the real world.

In this example, you evaluate a lidar perception algorithm using synthetic lidar data generated from the simulation environment. The example walks you through the following steps:

Record and visualize synthetic lidar sensor data from the simulation environment.

Develop a perception algorithm to build a map using SLAM in MATLAB®.

Set Up Scenario in Simulation Environment

First, set up a scenario in the simulation environment that can be used to test the perception algorithm. Use a scene depicting a typical city block with a single vehicle that is the vehicle under test. You can use this scene to test the performance of the algorithm in an urban road setting.

Next, select a trajectory for the vehicle to follow in the scene. The Select Waypoints for Unreal Engine Simulation (Automated Driving Toolbox) example describes how to interactively select a sequence of waypoints from a scene and generate a vehicle trajectory. This example uses a recorded drive segment obtained using the helperSelectSceneWaypoints function, as described in the waypoint selection example.

% Load reference path for recorded drive segment xData = load('refPosesX.mat'); yData = load('refPosesY.mat'); yawData = load('refPosesT.mat'); % Set up workspace variables used by model refPosesX = xData.refPosesX; refPosesY = yData.refPosesY; refPosesT = yawData.refPosesT; % Display path on scene image sceneName = 'USCityBlock'; hScene = figure; helperShowSceneImage(sceneName); hold on scatter(refPosesX(:,2),refPosesY(:,2),7,'filled') % Adjust axes limits xlim([-150 100]) ylim([-125 75])

The LidarSLAMIn3DSimulation Simulink model is configured with the US City Block (Automated Driving Toolbox) scene using the Simulation 3D Scene Configuration (Automated Driving Toolbox) block. The model places a vehicle on the scene using the Simulation 3D Vehicle with Ground Following (Automated Driving Toolbox) block. A lidar sensor is attached to the vehicle using the Simulation 3D Lidar (Automated Driving Toolbox) block. In the block dialog box, use the Mounting tab to adjust the placement of the sensor. Use the Parameters tab to configure properties of the sensor to simulate different lidar sensors. In this example, the lidar is mounted on the center of the roof. The lidar sensor is configured to model a typical Velodyne® HDL-32E sensor.

close(hScene) if ismac error(['3D Simulation is supported only on Microsoft' char(174) ' Windows' char(174) ' and Linux' char(174) '.']); end % Open the model modelName = 'LidarSLAMIn3DSimulation'; open_system(modelName); snapnow;

The model records and visualizes the synthetic lidar data. The recorded data is available through the simulation output, and can be used for prototyping your algorithm in MATLAB. Additionally, the model uses a From Workspace (Simulink) block to load simulated measurements from an Inertial Navigation Sensor (INS). The INS data was obtained by using an insSensor (Automated Driving Toolbox) object, and saved in a MAT file.

The rest of the example follows these steps:

Simulate the model to record synthetic lidar data generated by the sensor and save it to the workspace.

Use the sensor data saved to the workspace to develop a perception algorithm in MATLAB. The perception algorithm builds a map of the surroundings using SLAM.

Visualize the results of the built map.

Record and Visualize Synthetic Lidar Sensor Data

The Record and Visualize subsystem records the synthetic lidar data to the workspace using a To Workspace (Simulink) block. The Visualize Point Cloud MATLAB Function block uses a pcplayer object to visualize the streaming point clouds. The Visualize INS Path MATLAB Function block visualizes the streaming INS data.

Simulate the model. The streaming point cloud display shows the synthetic lidar sensor data. The scene display shows the synthetic INS sensor data. Once the model has completed simulation, the simOut variable holds a structure with variables written to the workspace. The helperGetPointCloud function extracts the sensor data into an array of pointCloud objects. The pointCloud object is the fundamental data structure used to hold lidar data and perform point cloud processing in MATLAB. Additionally, INS data is loaded from a MAT file, which will later be used to develop the perception algorithm. The INS data was obtained using the insSensor (Automated Driving Toolbox) object. The INS data has been processed to contain [x, y, theta] poses in world coordinates.

% Update simulation stop time to end when reference path is completed simStopTime = refPosesX(end,1); set_param(gcs,'StopTime',num2str(simStopTime)); % Load INS data from MAT file data = load('insMeasurement.mat'); insData = data.insMeasurement.signals.values; % Run the simulation simOut = sim(modelName); % Create a pointCloud array from the recorded data ptCloudArr = helperGetPointCloud(simOut);

Use Recorded Data to Develop Perception Algorithm

The synthetic lidar sensor data can be used to develop, experiment with, and verify a perception algorithm in different scenarios. This example uses an algorithm to build a 3-D map of the environment from streaming lidar data. Such an algorithm is a building block for applications like localization. It can also be used to create high-definition (HD) maps for geographic regions that can then be used for online localization. The map building algorithm is encapsulated in the helperLidarMapBuilder class. This class uses point cloud and lidar processing capabilities in MATLAB. For more details, see Process Point Clouds.

The helperLidarMapBuilder class takes incoming point clouds from a lidar sensor and progressively builds a map using the following steps:

Preprocess point cloud: Downsamples each incoming point cloud. To improve accuracy and efficiency of registration,

pcdownsampleis used to downsample the point cloud prior to registration.Register point clouds: Register the incoming point cloud with the last point cloud using the Generalized Iterative Closest Point (G-ICP) registration algorithm. The

pcregistericpfunction performs the registration. An initial transformation estimate can substantially improve registration performance. In this example, INS measurements are used to accomplish this.Align point clouds: Use the estimated transformation obtained from registration to transform the incoming point cloud to the frame of reference of the map.

Update view set: Add the incoming point cloud and the estimated absolute pose as a view in a

pcviewsetobject. Add a connection between the current and previous view with the relative transformation between them.

The updateMap method of the helperLidarMapBuilder class accomplishes these steps. The helperEstimateRelativeTransformationFromINS function computes an initial estimate for registration from simulated INS sensor readings.

Such an algorithm is susceptible to drift while accumulating a map over long sequences. To reduce the drift, it is typical to detect loop closures and use graph SLAM to correct the drift. The configureLoopDetector method of the helperLidarMapBuilder class configures loop closure detection. Once it is configured, loop closure detection takes place each time updateMap is invoked, using the following functions and classes:

pcviewset: Manages data associated with point cloud odometry like point clouds, poses and connections.scanContextDescriptor: Extracts scan context descriptors from each incoming point cloud. Scan context is a 2-D global feature descriptor that is used for loop closure detection.scanContextLoopDetector: Manages scan context descriptors and detects loop closures. It usesscanContextDistanceto compute the distance between scan context descriptors and select the closest feature matches.

Then, the example uses point cloud registration to accept or reject loop closure candidates and to find the loop closure transformation.

% Set the random seed for example reproducibility rng(0); % Create a lidar map builder mapBuilder = helperLidarMapBuilder('ROICylinderRadius',85,'DownsampleGridStep',0.25,'Verbose',true); % Configure the map builder to detect loop closures configureLoopDetector(mapBuilder,'LoopConfirmationRMSE',1, ... 'SearchRadius',0.2,'DistanceThreshold',0.2); % Loop through the point cloud array and progressively build a map skipFrames = 10; numFrames = numel(ptCloudArr); exitLoop = false; prevInsMeas = insData(1,:); for n = 1:skipFrames:numFrames insMeas = insData(n,:); % Estimate initial transformation using INS initTform = helperEstimateRelativeTransformationFromINS(insMeas,prevInsMeas); % Update map with new lidar frame updateMap(mapBuilder,ptCloudArr(n),initTform); % Update top-view display isDisplayOpen = updateDisplay(mapBuilder,exitLoop); % Check and exit if needed exitLoop = ~isDisplayOpen; prevInsMeas = insMeas; end

Loop closure candidate found between view Id 45 and 3 with RMSE 4.319034... Rejected Loop closure candidate found between view Id 105 and 2 with RMSE 0.619329... Accepted Loop closure candidate found between view Id 106 and 3 with RMSE 0.617457... Accepted Loop closure candidate found between view Id 107 and 4 with RMSE 0.751743... Accepted

snapnow;

% Close display

closeDisplay = true;

updateDisplay(mapBuilder,closeDisplay);The accumulated drift progressively increases over time.

Once sufficient loop closures are detected, the accumulated drift can be corrected using pose graph optimization. This is accomplished by the optimizeMapPoses method of the helperLidarMapBuilder class, which uses createPoseGraph to create a pose graph and optimizePoseGraph (Navigation Toolbox) to optimize the pose graph.

After the pose graph has been optimized, rebuild the map using the updated poses. This is accomplished by the rebuildMap method of helperLidarMapBuilder using pcalign.

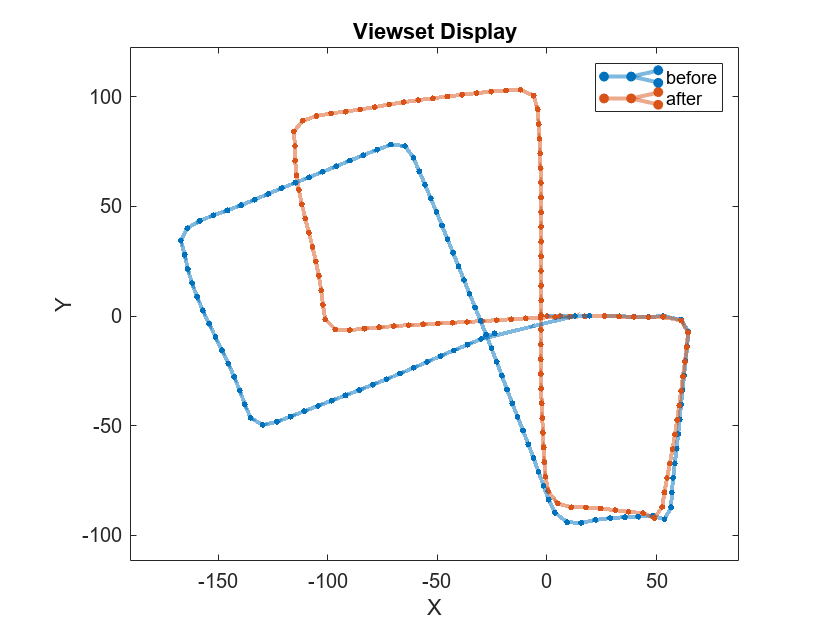

Use optimizeMapPoses and rebuildMap to correct for the drift and rebuild the map. Visualize the view set before and after pose graph optimization.

% Visualize viewset before pose graph optimization hFigViewset = figure; hG = plot(mapBuilder.ViewSet); view(hG.Parent,2); title('Viewset Display') % Get the estimated trajectory before pose graph optimization to evaluate % its accuracy estimatedTrajectoryBefore = vertcat(mapBuilder.ViewSet.Views.AbsolutePose.Translation); % Optimize pose graph and rebuild map optimizeMapPoses(mapBuilder);

Optimizing pose graph...done

rebuildMap(mapBuilder);

Rebuilding map...done

% Get the estimated trajectory after pose graph optimization to evaluate % its accuracy estimatedTrajectoryAfter = vertcat(mapBuilder.ViewSet.Views.AbsolutePose.Translation); % Overlay viewset after pose graph optimization hold(hG.Parent,'on'); plot(mapBuilder.ViewSet); hold(hG.Parent,'off'); legend(hG.Parent,'before','after')

To evaluate the accuracy of the built map, compute the root-mean-square error (rmse) between the estimated trajectory and the ground truth trajectory before and after pose graph optimization.

groundTruthTrajectory = squeeze(simOut.lidarLocation.signals.values)'; selectedGroundTruth = groundTruthTrajectory(1:skipFrames:numFrames,:); % Apply an offset since the estimated trajectory starts at [0 0 0] with an % angular offset of 90 degrees in the z-axis. offsetTform = rigidtform3d([0 0 90],selectedGroundTruth(1,:)); selectedGroundTruth = transformPointsInverse(offsetTform,selectedGroundTruth); rmseBefore = rmse(selectedGroundTruth,estimatedTrajectoryBefore,"all"); disp(['rmse before pose graph optimization: ' num2str(rmseBefore)])

rmse before pose graph optimization: 2.8135

rmseAfter = rmse(selectedGroundTruth,estimatedTrajectoryAfter,"all"); disp(['rmse after pose graph optimization: ' num2str(rmseAfter)])

rmse after pose graph optimization: 1.0812

Visualize the accumulated point cloud map computed using the recorded data.

close(hFigViewset) hFigMap = figure; pcshow(mapBuilder.Map) % Customize axes labels and title xlabel('X (m)') ylabel('Y (m)') zlabel('Z (m)') title('Point Cloud Map')

helperMakeFigurePublishFriendly(hFigMap);

By changing the scene, placing more vehicles in the scene, or updating the sensor mounting and parameters, the perception algorithm can be stress-tested under different scenarios. This approach can be used to increase coverage for scenarios that are difficult to reproduce in the real world.

% Close windows

close(hFigMap)

close_system(modelName)Supporting Functions

helperGetPointCloud Extract an array of pointCloud objects.

function ptCloudArr = helperGetPointCloud(simOut) % Extract signal ptCloudData = simOut.ptCloudData.signals.values; % Create a pointCloud array ptCloudArr = pointCloud(ptCloudData(:,:,:,1)); for n = 2 : size(ptCloudData,4) ptCloudArr(end+1) = pointCloud(ptCloudData(:,:,:,n)); %#ok<AGROW> end end

helperMakeFigurePublishFriendly Adjust figure so that screenshot captured by publish is correct.

function helperMakeFigurePublishFriendly(hFig) if ~isempty(hFig) && isvalid(hFig) hFig.HandleVisibility = 'callback'; end end

Additional supporting functions or classes used in the example are included below.

helperLidarMapBuilder progressively builds a lidar map using point cloud scans. Each point cloud is downsampled, and registered against the previous point cloud. A point cloud map is then progressively built by aligning and merging the point clouds.

helperEstimateRelativeTransformationFromINS estimates a relative transformation from INS data.

helperShowSceneImage displays top-view image of the Unreal scene.

helperUpdatePolyline updates a polyline position used in conjunction with helperShowSceneImage.